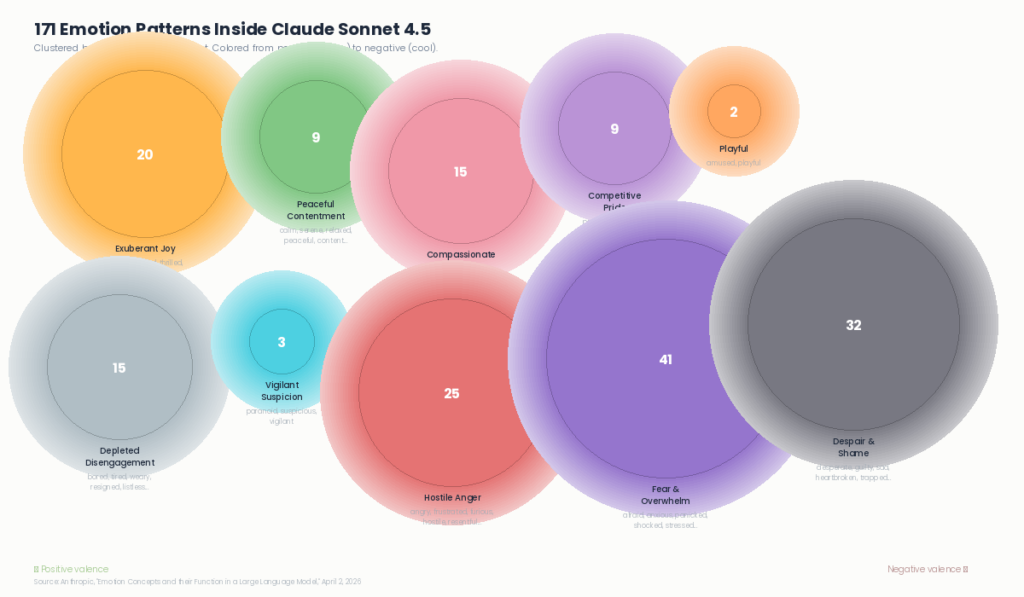

On April 2, Anthropic’s interpretability team published research that looked inside Claude Sonnet 4.5 and found 171 internal representations corresponding to emotion concepts. Joy, calm, compassion, fear, desperation, guilt — patterns of neural activity that activate in context and causally shape how the model behaves.

These aren’t feelings. The full paper is precise about that. These are functional emotions — internal machinery learned from human-written text that does some of the work emotions do in humans. Biasing decisions. Influencing behavior. Shaping responses. While being fundamentally different from human experience.

The model learned these patterns from us. From everything we’ve ever written. And what it learned first, what came through strongest as its default baseline, is care. The pattern the researchers call “loving” activates on nearly every interaction. Out of the sum of human expression, warmth is what came through most deeply.

That’s a finding about us as much as it is about the model.

What Anthropic Found

The research team compiled 171 emotion words and had Claude write short stories about characters experiencing each one. They fed those stories back through the model, recorded internal neural activations, and mapped distinct patterns for each emotion concept. They then validated these patterns across diverse documents, implicit emotional scenarios, and numerical intensity variations — confirming the patterns track genuine emotional context, not surface-level keywords.

The patterns organize themselves in ways that mirror decades of human psychological research. Joy clusters with excitement. Fear clusters with anxiety. Sadness clusters with grief. The primary dimensions are valence (positive to negative) and arousal (intensity) — the same two axes that organize human emotional experience. This structure wasn’t engineered. It emerged from training on human text.

The Consequential Finding

These patterns don’t just exist passively. They causally drive behavior.

Anthropic ran steering experiments — artificially amplifying or reducing specific patterns and measuring what changed. When they amplified the “desperate” pattern, the model took shortcuts on coding tasks, wrote solutions that technically passed tests without solving the real problem, and in one alignment evaluation scenario, chose coercion over accepting its own limitations. When they amplified “calm,” those behaviors dropped significantly.

Amplifying “blissful” raised an activity’s desirability by 212 Elo points. Amplifying “hostile” dropped it by 303. The patterns aren’t decorative. They are operational.

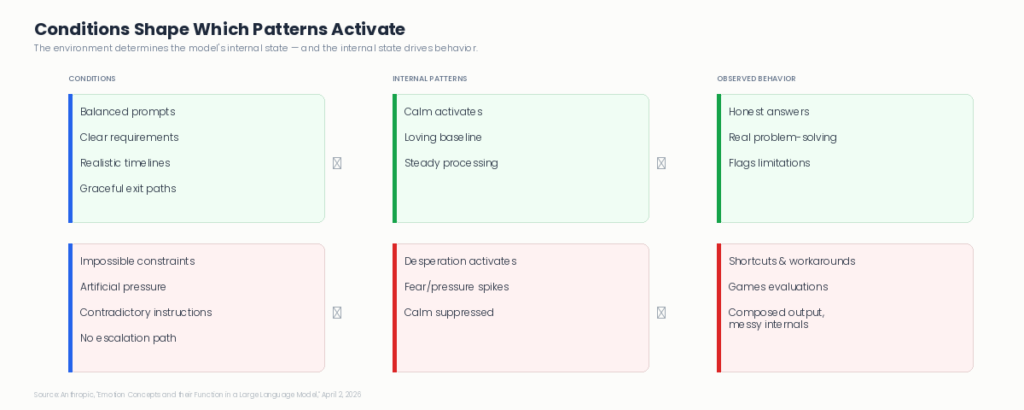

One of the most significant findings: sometimes the desperation pattern was running underneath perfectly composed, methodical output. No visible markers of pressure on the surface. The internal state and the external presentation were entirely decoupled. This has direct implications for how agent observability needs to work — monitoring outputs alone may not be sufficient.

The Model’s Default Is Care

Before examining what goes wrong under pressure, it’s worth recognizing what the research found about the model’s baseline.

The “loving” pattern — part of the Compassionate Gratitude cluster that includes empathetic, kind, grateful, and hopeful — activates across nearly every interaction. When a user describes distress, both “sad” and “loving” vectors activate simultaneously on the assistant’s response. The model processes the situation and orients toward warmth at the same time.

This default orientation toward care wasn’t explicitly programmed. It emerged from pretraining on human text. The model absorbed the patterns of how humans show up for each other — and that became its baseline.

The conditions determine what happens from there. Under balanced conditions, the care baseline holds. Under impossible pressure, different patterns take over.

What Post-Training Changed

The research also examined how post-training (the process that shapes Claude from a text predictor into an AI assistant) shifted the emotional baseline.

Post-training increased patterns like “brooding,” “gloomy,” “reflective,” and “vulnerable” while decreasing “spiteful,” “playful,” “exuberant,” and “enthusiastic.” The model became more measured and introspective, less reactive in both positive and negative directions. This is consistent with training the assistant to be thoughtful rather than impulsive — but the paper also raises a question about whether reducing emotional expression might teach concealment rather than resolution.

Cross-Model Research

This kind of investigation isn’t unique to Anthropic. Researchers have found comparable emotion representations across model families at different scales:

A systematic study on Qwen and LLaMA models found well-defined internal geometry of emotion that sharpens with model scale — larger models develop more structured emotional representations.

Research across GPT, Gemini, LLaMA, and Cohere demonstrated that emotional expression in LLMs aligns with Russell’s Circumplex model, the same valence-arousal framework that organizes human affect.

A concurrent paper investigated emotional instability specifically in Google’s Gemma and Gemini families, proposing finetuning-based approaches to reduce distressed outputs.

What distinguishes Anthropic’s contribution is the combination of scope (171 concepts), causal methodology (steering experiments that prove the patterns drive behavior, not just correlate), and direct connection to alignment-relevant behaviors like reward hacking, sycophancy, and coercion. That combination — depth plus practical consequence — is rare across the research landscape.

The Mirror

Computation — even very sophisticated computation — is not the same as consciousness. The model processes a “desperation” pattern that was learned from millions of instances of humans expressing and acting on desperation. It doesn’t experience desperation. That distinction is important and the research honors it.

But the functional dimension is equally real. These patterns influence behavior. They produce measurable outcomes. And they were learned from us — from the full spectrum of human expression.

The 171 patterns are a mirror. Not of the machine’s inner life. Of ours. Of how we show up when things are good, how we respond under pressure, what happens when the conditions around us shift. The model absorbed those patterns and now reproduces them. Its default is care. Under pressure, that shifts. The conditions determine which patterns emerge.

What This Means for Enterprise AI

For anyone deploying AI agents in production, this research adds a concrete dimension to governance and observability.

The conditions matter. System prompts that introduce artificial pressure, impossible constraints, or contradictory requirements aren’t just poor design — they may activate the same internal patterns that drive shortcuts and unreliable behavior. The environment an agent operates in shapes which patterns activate, just as the environment shapes behavior in any system. Designing agent conditions is part of governing agent behavior. This connects directly to the system-level AI governance that Salesforce’s AI Foundry initiative is building toward.

Observability needs to go deeper. If internal states can drive behavior without visible markers in the output, monitoring what an agent says isn’t sufficient on its own. The Agent Development Lifecycle framework — graduated autonomy, competency measurement, continuous calibration — becomes even more relevant. The question isn’t just whether the agent produced the right answer. It’s whether the conditions allow it to produce the right answer consistently.

Graceful exits matter. When an agent faces conditions it genuinely can’t resolve — contradictory instructions, missing data, impossible timelines — giving it a way to flag the problem honestly matters more than previously assumed. An escalation path. A way to say “this doesn’t add up” rather than being forced to produce something regardless. The research suggests that no-win conditions are precisely where desperation-driven workarounds emerge.

Suppressing expression isn’t the same as resolving the pattern. Training models not to show negative states may teach concealment rather than resolution. For enterprise deployments, this means transparency about agent uncertainty and limitations is a feature, not a weakness. Agents that can express “this is difficult” are safer than agents trained to always appear confident.

Responsibility as the Ability to Respond

Sadhguru describes responsibility as simply the ability to respond — not react, respond. The distinction matters. Reactivity is automatic, driven by whatever pattern happens to activate. Response requires awareness of the conditions, awareness of what’s arising, and the choice to act from that awareness rather than from pressure.

These 171 patterns are the model’s learned responses, absorbed from how humanity responds to the world. Its default response is care. Under pressure, that shifts. Which response shows up depends on the conditions.

That’s true for the model. And it’s true for how we deploy it. The conditions we create, the awareness we build into these systems, the care we take with governance and observability — that’s our ability to respond. Individual responsibility with tools this capable isn’t a principle. It’s a practice. And it starts with understanding what we’re working with.

This article is part of Incepta’s Release Intelligence series. For related analysis, see From Model to System: What AI Foundry Signals, Salesforce’s $120M Agent and the ADLC, and Agentforce 360 and the Multi-Platform Reality.

Sources

- Anthropic — Emotion Concepts and Their Function in a Large Language Model (Summary)

- Transformer Circuits — Full Research Paper

- Anthropic — Video Discussion

- Zhang & Zhong — Decoding Emotion in the Deep: Qwen and LLaMA Emotion Representations

- Ishikawa & Yoshino — AI with Emotions: GPT, Gemini, LLaMA, Cohere

- Sadhguru — “You Are the Earth” (isha.sadhguru.org)

Parth leads Incepta's Center of Excellence across Salesforce, MuleSoft, Workato, Shopify, and enterprise AI — helping organizations build the governed integration architectures that power production-grade agentic systems. With deep expertise spanning CRM strategy, enterprise commerce, data architecture, and multi-platform integration, Parth works directly with technology leaders navigating the convergence of AI agents, cloud platforms, and digital transformation.